¹Ewha Womans University, Korea (kim.sujeong@ewhain.net, kimy@ewha.ac.kr)

²INRIA Rhone-Alpes, France (stephane.redon@inria.fr)

Abstract


We propose a method for view-dependent simplification of articulated-body dynamics, which enables an automatic trade-off between visual precision and computational efficiency. We begin by discussing the problem of simplifying a simulation based on visual criteria, and show that it raises a number of challenging questions. We then focus on articulated-body dynamics simulation, and propose a semi-predictive approach that combines exact, a priori error-metric computations with visibility estimations. We suggest several variants of semi-predictive metrics based on hierarchical data structures and the use of graphics hardware, and discuss their relative merits in terms of computational efficiency and precision. Finally, we present several benchmarks and demonstrate how our view-dependent articulated-body dynamics method allows an animator (or a physics engine) to finely tune the visual quality and obtain potentially significant speed-ups during interactive or off-line simulations.
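As a rough illustration of the precision/efficiency trade-off described above, the sketch below converts a screen-space error budget into a world-space motion tolerance using a standard perspective-projection relation. It is not the error metric from the paper: the function name `world_tolerance`, its parameters, and the rule that an invisible body gets an unbounded tolerance are all assumptions made for illustration.

```python
import math


def world_tolerance(pixel_tolerance, distance, fov_y_radians,
                    viewport_height_px, visible=True):
    """Largest world-space displacement that stays within `pixel_tolerance`
    pixels on screen (hypothetical helper, not the paper's metric)."""
    if not visible:
        # Assumption: a body that cannot be seen may carry any motion error.
        return math.inf
    # World-space height covered by one pixel at the given viewing distance.
    world_per_pixel = (2.0 * distance * math.tan(fov_y_radians / 2.0)
                       / viewport_height_px)
    return pixel_tolerance * world_per_pixel


# A body twice as far from the camera tolerates twice the world-space error.
near = world_tolerance(1.0, 10.0, math.pi / 2, 1000)
far = world_tolerance(1.0, 20.0, math.pi / 2, 1000)
```

Under these assumptions, a simulator could spend less effort on bodies whose tolerance is large (distant or hidden) and more on bodies close to the viewer.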

Key Words


dynamics, kinematics, level-of-detail, simulation, articulated bodies

Full Text

Download Video


Benchmark Scenario |

Video |

Swinging Pendulum |

|

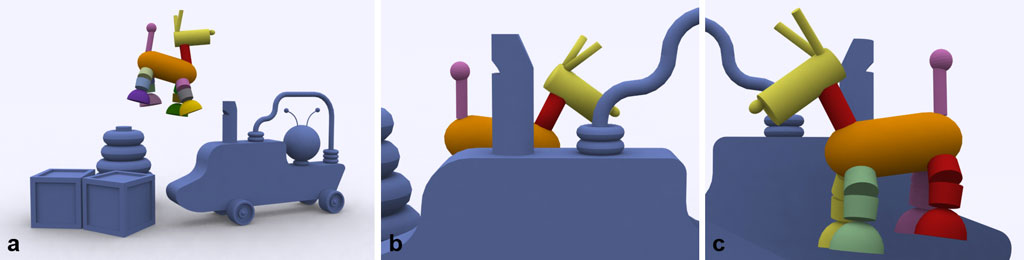

Haptic-enabled Dog Puppet |

|

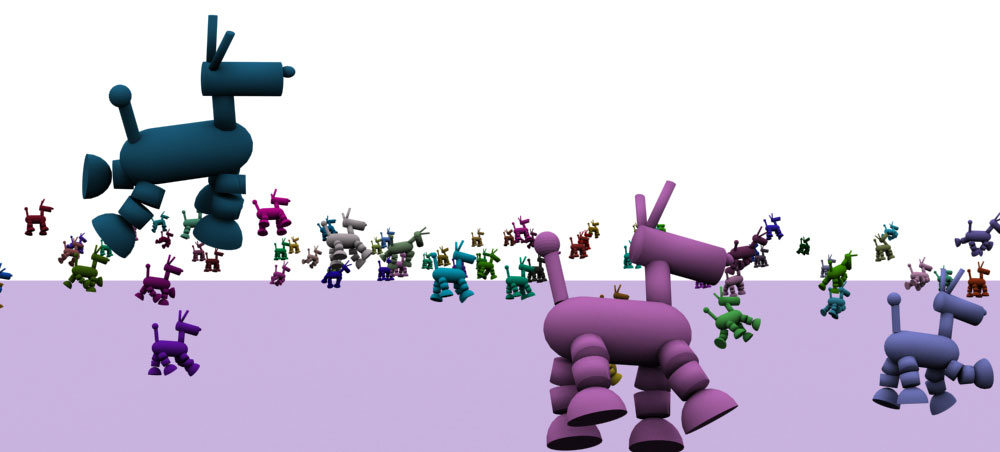

Hanging Toy Dogs |

|

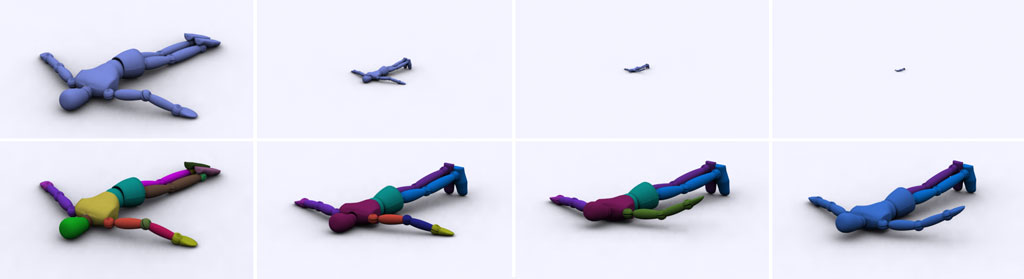

Falling Character |

|

Comparions between BVH- and OQ- based Methods |

(Video Codec: DivX 6.0)

Benchmarking Scenarios


Swinging Pendulum

Haptic-enabled Dog Puppet

Hanging Toy Dogs

Falling Character

Links to Relevant Research


Continuous Collision Detection for Adaptive Simulations of Articulated Bodies project page

by Sujeong Kim, Stephane Redon, Young J. Kim

Adaptive Dynamics of Articulated Bodies project page

by Stephane Redon, Nico Galoppo and Ming C. Lin

Fast Continuous Collision Detection for Articulated Models project page

by Stephane Redon, Young J. Kim, Ming C. Lin, Dinesh Manocha

Copyright 2006 Computer Graphics Laboratory

Dept of Computer Science & Engineering

Ewha Womans University, Seoul, Korea